Introduction: From AI Experimentation to Enterprise Execution

Large Language Models (LLMs) are rapidly shifting from innovation labs into core enterprise systems. Organizations have moved from asking “What can AI do?” to asking “How do we operationalize AI at scale?”

According to McKinsey & Company, generative AI could add $2.6 trillion to $4.4 trillion annually to the global economy, driven largely by enterprise use cases.

At CloudHew, we help organizations move beyond pilots to production-grade AI systems that integrate with cloud, data, and business workflows.

What Are Large Language Models (LLMs)?

Large Language Models are AI systems trained on massive datasets to understand and generate human language using transformer-based architectures.

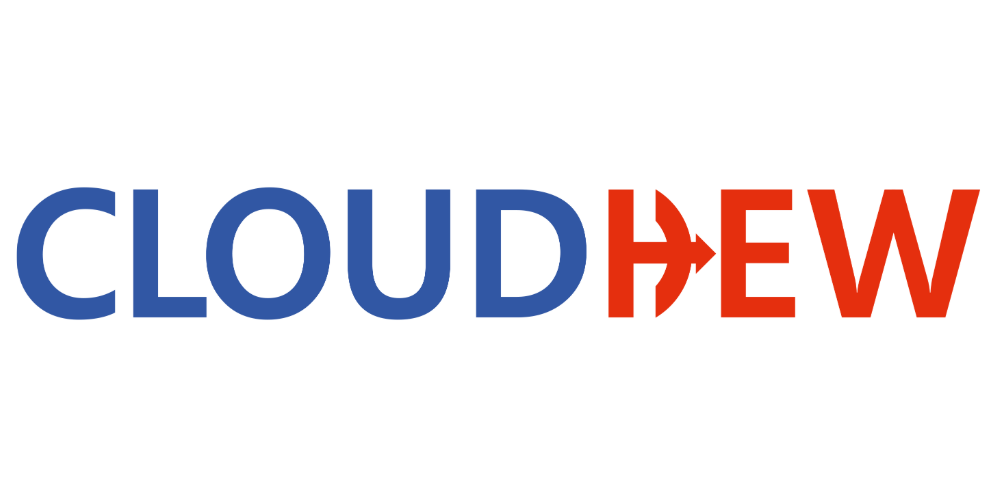

Core Capabilities

- Natural language understanding (NLU)

- Text generation & summarization

- Code generation

- Conversational AI

- Semantic search

Popular LLM Platforms

- OpenAI (GPT models)

- Google (Gemini)

- Meta (LLaMA)

- Anthropic (Claude)

How LLMs Work in Enterprise Systems

LLMs operate across three stages:

- Pre-training on large-scale datasets

- Fine-tuning for domain-specific intelligence

- Inference using prompts and contextual inputs

Modern enterprise architectures extend this with:

- Retrieval-Augmented Generation (RAG)

- Vector databases

- API orchestration

- Governance layers
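To make the RAG and vector database items above concrete, here is a minimal sketch of the retrieve-then-prompt flow. It assumes a toy word-count "embedding" and an in-memory list in place of a real embedding model and vector database; the sample documents and the names `embed`, `retrieve`, and `build_prompt` are hypothetical and chosen only for illustration.

```python
import math
import re
from collections import Counter

# Hypothetical enterprise snippets; a real system would index far more text.
DOCUMENTS = [
    "Refunds are processed within 14 days of the return request.",
    "Employees accrue 1.5 vacation days per month of service.",
    "Production deployments require approval from two reviewers.",
]

def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (assumption, not a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Stand-in for a vector database: (document, vector) pairs held in memory.
INDEX = [(doc, embed(doc)) for doc in DOCUMENTS]

def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the k documents most similar to the question."""
    query = embed(question)
    ranked = sorted(INDEX, key=lambda item: cosine(query, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]

def build_prompt(question: str) -> str:
    """Assemble a prompt that grounds the model in the retrieved context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )

print(build_prompt("How long do refunds take?"))
```

The resulting prompt would then be sent to whichever model the organization uses; that call is deliberately left out because it depends on the provider.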

Top Enterprise Use Cases of LLMs

1. Intelligent Customer Support Automation

LLMs enable context-aware, human-like support systems that significantly outperform traditional chatbots.

Key Capabilities:

- Multi-turn conversations

- Automated ticket resolution

- Real-time translation

- Knowledge base integration
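Multi-turn conversations depend on sending the model the relevant recent history with each request. The sketch below shows one possible way to keep that history within a size budget. It assumes word counts as a rough stand-in for tokens, and the `Conversation` class and its fields are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Conversation:
    system_prompt: str
    max_words: int = 50  # assumption: word count approximates a token budget
    turns: list = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        """Record one user or assistant message."""
        self.turns.append({"role": role, "content": content})

    def window(self) -> list:
        """Return the system prompt plus the newest turns that fit the budget."""
        kept, used = [], 0
        for turn in reversed(self.turns):
            cost = len(turn["content"].split())
            if used + cost > self.max_words:
                break  # older turns are dropped once the budget is spent
            kept.append(turn)
            used += cost
        system = {"role": "system", "content": self.system_prompt}
        return [system] + kept[::-1]

conv = Conversation("You are a support agent.", max_words=6)
conv.add("user", "my order is late")
conv.add("assistant", "sorry checking now")
conv.add("user", "thanks")
for message in conv.window():
    print(message["role"], "->", message["content"])
```

Dropping the oldest turns is the simplest policy; summarizing them instead is a common alternative when early context matters.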

Impact:

- Up to 60% cost reduction in support operations

- Faster resolution times

- Improved CSAT scores

2. AI-Powered Content Generation at Scale

LLMs are transforming marketing and content workflows.

Applications:

- Blog generation

- SEO content creation

- Email campaigns

- Product descriptions

Enterprise Value:

- 5–10x faster content production

- Consistent brand voice

- Reduced operational cost

3. AI-Assisted Software Development (Vibe Coding)

LLMs are redefining development through AI-assisted engineering workflows.

Use Cases:

- Code generation

- Debugging assistance

- Documentation automation

- Test case generation

Outcome:

- 30–50% faster development cycles

- Improved code quality

- Reduced engineering overhead

4. Enterprise Knowledge Management & Semantic Search

LLMs unlock insights from unstructured enterprise data.

Capabilities:

- Semantic document search

- Context-aware retrieval

- Internal AI assistants

Examples:

- Legal document assistant

- HR policy chatbot

- Technical knowledge navigator

5. AI Agents & Autonomous Workflows

LLMs are evolving into agentic AI systems capable of executing workflows.

Applications:

- Procurement automation

- Sales assistants

- IT operations automation

Enterprise Impact:

- End-to-end automation

- Faster execution cycles

- Reduced manual dependency
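An agentic workflow of the kind described above can be sketched as a loop in which a planner picks a tool, the tool runs, and the result feeds the next decision. In this illustration the planner is a hard-coded stub standing in for an LLM call, and the tools, inventory data, and function names are all hypothetical.

```python
from typing import Callable, Optional

# Hypothetical tools; real ones would call procurement or ITSM systems.
def check_stock(item: str) -> str:
    inventory = {"laptop": 3, "monitor": 0}
    return f"{item}: {inventory.get(item, 0)} in stock"

def create_purchase_order(item: str) -> str:
    return f"purchase order created for {item}"

# Allow-list of tools the agent is permitted to invoke.
TOOLS: dict[str, Callable[[str], str]] = {
    "check_stock": check_stock,
    "create_purchase_order": create_purchase_order,
}

def plan_next_step(goal: str, history: list[str]) -> Optional[tuple[str, str]]:
    """Stub planner (assumption): an LLM would choose the next tool here."""
    if not history:
        return ("check_stock", goal)
    if history[-1].endswith(": 0 in stock"):
        return ("create_purchase_order", goal)
    return None  # nothing left to do

def run_agent(goal: str, max_steps: int = 5) -> list[str]:
    """Run plan/act cycles until the planner stops or the step cap is hit."""
    history: list[str] = []
    for _ in range(max_steps):
        step = plan_next_step(goal, history)
        if step is None:
            break
        tool_name, argument = step
        if tool_name not in TOOLS:
            history.append(f"rejected unknown tool {tool_name}")
            break
        history.append(TOOLS[tool_name](argument))
    return history

print(run_agent("monitor"))
print(run_agent("laptop"))
```

The step cap and the tool allow-list are the two controls worth keeping even in a production design, since they bound what an autonomous loop can do.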

6. AI-Driven Data Analysis & Business Intelligence

LLMs democratize access to data.

Capabilities:

- Query data using natural language

- Automated reporting

- Insight extraction

According to Gartner, augmented analytics powered by AI will become the dominant model for data consumption.
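Natural-language querying usually means translating a question into SQL and checking that SQL before it runs. The following sketch assumes a stubbed translator in place of an LLM call, an in-memory SQLite table with made-up sales rows, and a simple read-only guardrail; all names are hypothetical.

```python
import sqlite3

def translate_to_sql(question: str) -> str:
    """Stub (assumption): an LLM would generate this SQL from the question."""
    canned = {
        "total revenue by region":
            "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region",
    }
    return canned[question.lower().strip("? ")]

def is_read_only(sql: str) -> bool:
    """Guardrail: accept a single SELECT statement and nothing else."""
    statement = sql.strip().rstrip(";")
    return statement.upper().startswith("SELECT") and ";" not in statement

def answer(conn: sqlite3.Connection, question: str) -> list:
    """Translate, validate, then execute the query."""
    sql = translate_to_sql(question)
    if not is_read_only(sql):
        raise ValueError("generated SQL is not a single SELECT statement")
    return conn.execute(sql).fetchall()

# Illustrative data only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("EMEA", 120.0), ("APAC", 80.0), ("EMEA", 30.0)],
)

print(answer(conn, "Total revenue by region?"))
```

A string check like `is_read_only` is only a first line of defense; running generated queries under a database account with read-only permissions is the more robust control.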

Real-World Enterprise Applications

| Industry | Use Case | Business Outcome |
| --- | --- | --- |
| BFSI | Fraud detection assistants | Reduced risk, faster decisions |
| Healthcare | Clinical documentation AI | Improved efficiency |
| Retail | Personalized AI assistants | Increased conversions |
| Manufacturing | Predictive insights | Reduced downtime |
| SaaS | AI copilots | Enhanced user experience |

Challenges in LLM Adoption

1. Data Privacy & Compliance

- Regulatory frameworks (GDPR, HIPAA)

- Sensitive data exposure

2. Hallucination & Accuracy

- Incorrect outputs

- Lack of explainability

3. Integration Complexity

- Legacy system dependencies

- API orchestration

4. Cost & Scalability

- High compute costs

- Model optimization challenges

Best Practices for Enterprise LLM Implementation

1. Focus on High-ROI Use Cases

Start where measurable impact is clear.

2. Implement RAG Architecture

Ground AI outputs in enterprise data.

3. Apply Governance Frameworks

- Prompt control

- Output validation

- Access management
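The three governance controls above can each be reduced to a small check. This is a minimal sketch under stated assumptions: the role map, the prompt template, the email-only redaction rule, and the citation check are hypothetical examples of what such controls might look like, not a complete framework.

```python
import re

# Access management: hypothetical mapping of roles to document collections.
ROLE_PERMISSIONS = {
    "hr_analyst": {"hr_policies"},
    "engineer": {"runbooks", "api_docs"},
}

# Prompt control: one reviewed template instead of free-form prompts.
PROMPT_TEMPLATE = (
    "You are an internal assistant. Cite sources as [source_id].\n"
    "Context:\n{context}\n"
    "Question: {question}"
)

EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")

def can_access(role: str, collection: str) -> bool:
    """Allow retrieval only from collections granted to the role."""
    return collection in ROLE_PERMISSIONS.get(role, set())

def redact_emails(text: str) -> str:
    """Mask email addresses before text reaches the model or the user."""
    return EMAIL_PATTERN.sub("[REDACTED_EMAIL]", text)

def validate_output(answer: str, source_ids: list[str]) -> bool:
    """Output validation: require a non-empty answer citing a known source."""
    return bool(answer.strip()) and any(f"[{sid}]" in answer for sid in source_ids)

print(can_access("engineer", "hr_policies"))
print(redact_emails("Contact jane.doe@example.com."))
print(validate_output("Leave accrues monthly [hr-12].", ["hr-12"]))
```

Real deployments typically extend the redaction step to other identifiers and log every rejected output for review.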

4. Optimize Cost & Performance

- Model selection strategy

- Token optimization
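Model selection and token optimization both come down to arithmetic on tokens and prices. The sketch below estimates monthly spend and routes short prompts to a cheaper model. The per-token prices are invented placeholders, and the word-count routing rule is a deliberately crude assumption; substitute a provider's actual rates and a better complexity signal.

```python
# Hypothetical prices in USD per 1,000 tokens (placeholders, not real rates).
PRICES = {
    "small": {"input": 0.0005, "output": 0.0015},
    "large": {"input": 0.01, "output": 0.03},
}

def monthly_cost(model: str, input_tokens: int, output_tokens: int,
                 requests_per_month: int) -> float:
    """Cost = (input tokens x input price + output tokens x output price) x volume."""
    price = PRICES[model]
    per_request = (
        input_tokens * price["input"] + output_tokens * price["output"]
    ) / 1000
    return per_request * requests_per_month

def choose_model(prompt: str, word_threshold: int = 200) -> str:
    """Crude routing rule (assumption): short prompts go to the small model."""
    return "small" if len(prompt.split()) <= word_threshold else "large"

for model in PRICES:
    cost = monthly_cost(model, input_tokens=2000, output_tokens=500,
                        requests_per_month=100_000)
    print(f"{model}: ${cost:,.2f} per month")

print(choose_model("Summarize this ticket in one sentence."))
```

Because input tokens are billed on every request, trimming retrieved context or conversation history often reduces cost more than shortening the answer.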

5. Continuous Monitoring & Feedback

- Performance tracking

- AI drift detection
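Drift detection can start as a comparison between a baseline quality score and a rolling window of recent scores. This sketch assumes each response already receives a numeric evaluation score between 0 and 1 (for example, from human review or an automated grader); the `DriftMonitor` class, window size, and tolerance are hypothetical.

```python
from collections import deque
from statistics import mean

class DriftMonitor:
    """Flags drift when the recent average falls below baseline by a margin."""

    def __init__(self, baseline_scores, window: int = 50, tolerance: float = 0.05):
        self.baseline = mean(baseline_scores)
        self.recent = deque(maxlen=window)  # keeps only the newest scores
        self.tolerance = tolerance

    def record(self, score: float) -> bool:
        """Add a score; return True once a full window shows degraded quality."""
        self.recent.append(score)
        window_full = len(self.recent) == self.recent.maxlen
        return window_full and (self.baseline - mean(self.recent)) > self.tolerance

monitor = DriftMonitor(baseline_scores=[0.9, 0.9], window=3, tolerance=0.05)
for score in [0.9, 0.7, 0.7]:
    print(score, monitor.record(score))
```

Waiting for a full window avoids alerting on a single bad response; the trade-off is slower detection, so the window size should reflect request volume.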

How CloudHew Enables Enterprise AI Transformation

CloudHew delivers:

- AI Strategy & Consulting

- Custom LLM Application Development

- RAG-based Knowledge Systems

- AI Agents & Workflow Automation

- Secure Cloud Deployment (Azure & AWS)

- Governance & Compliance Frameworks

Future of LLMs: The Next Phase of Enterprise AI

- Agentic AI ecosystems

- Multi-modal intelligence (text + image + video)

- Domain-specific LLMs

- Autonomous enterprise systems

According to PwC, AI could contribute up to $15.7 trillion to the global economy by 2030, making it one of the most transformative technologies of this decade.

Conclusion

Large Language Models are not just tools; they are becoming core infrastructure for modern enterprises.

The competitive advantage will not come from adopting AI, but from operationalizing it with governance, data integration, and measurable business outcomes.

FAQs

What are Large Language Models (LLMs)?

LLMs are AI systems trained on massive datasets to understand and generate human language for applications like chatbots, automation, and analytics.

What are examples of LLMs?

Examples include OpenAI's GPT models, Google Gemini, Meta LLaMA, and Anthropic Claude.

How are LLMs used in enterprises?

They are used for customer support, automation, analytics, software development, and decision-making systems.

What is RAG in LLMs?

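Retrieval-Augmented Generation (RAG) improves LLM accuracy by retrieving relevant passages from enterprise data sources at query time and supplying them to the model as context, so answers are grounded in that data rather than in training data alone.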

What are the risks of LLMs?

Key risks include data privacy and compliance exposure, hallucinated or inaccurate outputs, integration complexity, and high compute costs.